Want to wade into the sandy surf of the abyss? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post-Xitter web has spawned so many “esoteric” right-wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality-challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be).

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Credit and/or blame to David Gerard for starting this.)

Article posted here just for the line: “AI-made material is a waste product: flimsy, shoddy, disposable, a single-use plastic of the mind”

Oh hey, bay area techfash enthusing about AI and genocidal authoritarians? Must be a day ending in a Y. Today it is Vercel CEO and next.js dev Guillermo Rauch

https://nitter.net/rauchg/status/1972669025525158031

image description

A screenshot of a tweet by Guillermo Rauch, the CEO of Vercel. There’s a photograph of him next to Netanyahu. The tweet reads:

Enjoyed my discussion with PM Netanyahu on how AI education and literacy will keep our free societies ahead. We spoke about AI empowering everyone to build software and the importance of ensuring it serves quality and progress. Optimistic for peace, safety, and greatness for Israel and its neighbors.

I also have strong opinions about not using next.js or vercel (and server-side javascript in general is a bit of a car crash) but even if you thought it was great you should probably have a look around for alternatives. Just not ruby on rails, perhaps.

Amazing how they all went from oh us poor nerds vs the jocks to bending the knee and licking the boots of the strongmen. see also YT bribing Trump.

pushy rationalist tried to glom onto and fly to meet my niche internet microcelebrity friend & i talked her through setting boundaries instead of installing this person in her life. my good deed for the week

AI video generation use case: hallucinatory RETVRN clips about the good old days, such as, uh, walmart 20 years ago?

It hits the uncanny valley triggers quite hard. It’s faintly unsettling to watch at all, but every individual detail is just wrong and dreamlike in a bad way.

Also, weird scenery clipping, just like real kids did back in the day!

https://bsky.app/profile/mugrimm.bsky.social/post/3lzy77zydrc2q

genuinely think nostalgia might be the most purely evil emotion, and every one of these RETVRN ai videos i see strengthens that belief

It is a literal gateway to fascism imho, esp when people get into nostalgia for a time that never was.

And compared to nostalgia for mom n pop stores, this even is nostalgia for a mass produced product.

hallucinatory RETVRN clips about the good old days

Nostalgiabait is the slopgens’ specialty - being utterly incapable of creating anything new isn’t an issue if you’re trying to fabricate an idealis-

such as, uh, walmart 20 years ago?

Okay, stop everything, who the actual fuck would be nostalgic for going to a fucking Wal-Mart? I’ve got zero nostalgia for ASDA or any other British big-box hellscape like it, what the fuck’s so different across the pond?

(Even from a “making nostalgiabait” angle, something like, say, McDonalds would be a much better choice - unlike Wal-Mart, McD’s directly targets kids with their advertising, all-but guaranteeing you’ve got fuzzy childhood memories to take advantage of.)

I will say that the flipping between characters in order to disguise the fact that longer clips are impractical to render is a neat trick and fits well into the advert-like design, but rewatching it just really reinforces how much those kids look like something pretending real hard to be a human.

Also, fake old-celluloid-film filter for something that was supposed to be from 20 years ago? Really?

Also, fake old-celluloid-film filter for something that was supposed to be from 20 years ago? Really?

I was gonna say that was probably the slop extruder’s doing, but it looks to have been applied manually for some godforsaken reason. Best guess is whoever was behind this audiovisual extrusion thought “celluloid filter = Nostalgia™”.

New Ed Zitron to start the week off: The Case Against Generative AI

At the risk of being critical of Zitron, I have some comments. This is probably just nitpicking but regardless.

[…] a technology called Large Language Models (LLMs), which can also be used to generate images, video and computer code.

LLMs cannot be used to generate images or videos. Diffusion models can create images, but that’s not a text-generation model. I guess you could use an LLM to prompt an image or video generation model, but I’m not sure if that’s what he meant or not.

Large Language Models require entire clusters of servers connected with high-speed networking, all containing this thing called a GPU — graphics processing units.

Sort of, but not really. GPT-5 with its (presumably) trillions of parameters and its (apparently) hundreds of millions of users per month needs a lot of throughput to cater to that, but there’s nothing about LLMs that inherently requires massive GPU clusters with high-speed networking.

Here’s an LLM running on a Raspberry Pi

Of course, the number of people running LLMs on Raspberry Pis is effectively that one guy in the video, who did it to show an LLM running on a Raspberry Pi, and it’s not like it’s particularly fast without adding a GPU (and at the end of the day it’s still LLM output, so), so perhaps he’s just using “Large Language Models” to mean “the LLMs that the vast majority of people actually use.”
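For a rough sense of why a small model fits on hobbyist hardware at all, here is a back-of-envelope sketch: the memory needed just to hold the weights is roughly parameter count times bytes per parameter. The model sizes and quantization levels below are illustrative assumptions, not figures from Zitron’s piece or from the video, and the estimate ignores the KV cache and any runtime overhead.

```python
def weight_memory_gib(num_params: float, bits_per_param: int) -> float:
    """Approximate memory needed to hold the model weights, in GiB.

    Counts only the weights themselves: no KV cache, no activations,
    no framework overhead.
    """
    total_bytes = num_params * bits_per_param / 8
    return total_bytes / (1024 ** 3)


if __name__ == "__main__":
    # Hypothetical model sizes, chosen only to show the scaling.
    for label, params in [("1B params", 1e9), ("70B params", 70e9)]:
        for bits in (16, 4):
            gib = weight_memory_gib(params, bits)
            print(f"{label} at {bits}-bit: ~{gib:.1f} GiB")
```

Under these assumptions a small, heavily quantized model fits in the RAM of a single-board computer, while a large one does not. None of this says anything about speed or output quality.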

He’s not wrong about training, however.

IMO it’s not a particularly good start to his newsletter. An easy counter to his statement is that not all LLMs require massive amounts of compute to run. A counter to that counter is that training even smaller LLMs still requires vast amounts of compute that the average person doesn’t have, in addition to the copyrighted material needed to train on, and even with the win that Anthropic got, any LLM trained in the future is going to require vast amounts of capital for the training data alone. The problem is that he doesn’t state any of that. Maybe he does know about that and decided to omit it for brevity. If he did, then, personally, I think that’s a mistake. Or maybe I’m just not reading it properly.

The first paragraph immediately conflating all of generative AI with LLMs doesn’t particularly help his case either, even though stating that there are multiple types of generative AI wouldn’t really harm his thesis that this entire thing is a massive bubble. Again, perhaps he’s doing it for a reason that I’m not getting.

AI Shovelware: One Month Later by Mike Judge

The fact that we’re not seeing this gold rush behavior tells you everything. Either the productivity gains aren’t real, or every tech executive in Silicon Valley has suddenly forgotten how capitalism works.

… por que no los dos …

Or, this is how capitalism has always worked. See Enron for example. And we all just got so enthralled by the number (praised be its rise) that we took the guardrails off. The rising tidal wave which will flood all the land, raises all boats after all.

The goal of capitalism is not to produce goods, it is to create value for the owners of the capital. See also why techbros are turning on EA and EA (which EA is which, is left as an exercise to the reader).

Disappointed this wasn’t Beavis & Butt-head/King of the Hill/Office Space/Idiocracy/Silicon Valley Mike Judge

Microsoft launches ‘vibe working’ in Excel and Word

A new Office Agent in Copilot chat, powered by Anthropic models, is also launching today that can create PowerPoint presentations and Word documents from a “vibe working” chatbot.

McKinsey about to slash its own headcount after slashing everyone else’s

Microsoft says its Agent Mode in Excel has an accuracy rate of 57.2 percent in SpreadsheetBench, a benchmark for evaluating an AI model’s ability to edit real world spreadsheets.

They are openly admitting this? Do they really not realize how completely damning the number is…?

Don’t worry about the number, it will improve after the singularity.

https://scottaaronson.blog/?p=9183

Quantum scoot is quantum spooked 😱 after GPT-5 manages to solve a subproblem for him (after multiple attempts), thanks the powers that be for his tenure!

… even though GPT-5 probably generates the answer via websearch

After seeing this, I reminded myself that I’ve seen this type of thing happen before. Over the past half year, so many programmers enthusiastically embraced vibe coding after seeing one or two impressive results when trying it out for themselves. We all know how that is going right now. Baldur Bjarnason had some great essays (1, 2) about the dangers of relying on self-experimentation when judging something, especially if you’re already predisposed into believing it. It’s like a mark believing in a psychic after he throws out a couple dozen vague statements and the last one happens to match with something meaningful, after the mark interprets it for him.

Edit: Accidentally hit reply too early.

You think he would maybe, idk, search around to see if this was a known formula before making such a bombastic statement…

Funny how when there is something novel it always a) already existed in the training data or b) doesn’t actually seem to work.

Oh yeah, he wrote an update saying that the LLM is still great, even if the result is already known, because it saves him time. We have come full circle back to the exact same value proposition as the vibe coders.

The US economy is 100% on coyote time.

It wouldn’t matter if everyone came to their senses today. All the money that’s been invested into AI is gone. It has been turned into heat and swiftly-depreciating assets and can never be recouped.

It’s surreal isn’t it?

Check out this epic cope from an Anthropic employee desperately trying to convince himself and others that actually LLMs are getting exponentially better

https://www.julian.ac/blog/2025/09/27/failing-to-understand-the-exponential-again/

Includes screenshots of data where he really really hopes you don’t look at the source, and links to AI 2027.

I took a quick peek at his blog.

Oh dear, there is a dedicated rationality subsection…

Oh lol, I thought his name sounded familiar and yup, he was a concern troll in a Hackerspace I was in, some 12 years ago.

Oh god, he unironically recommends reading the sequences wtf 🤢🤮

Surprise level: zero

Great response^

I think Julian is going to be mildly surprised that METR’s chart keeps going up and yet has a relatively small effect on the majority of SWE roles.

At the same time, he did create AlphaZero so he has a big old noggin! I wonder, after his success at Go, was he swept up in the mania that we would quickly translate that success into super duper AI?

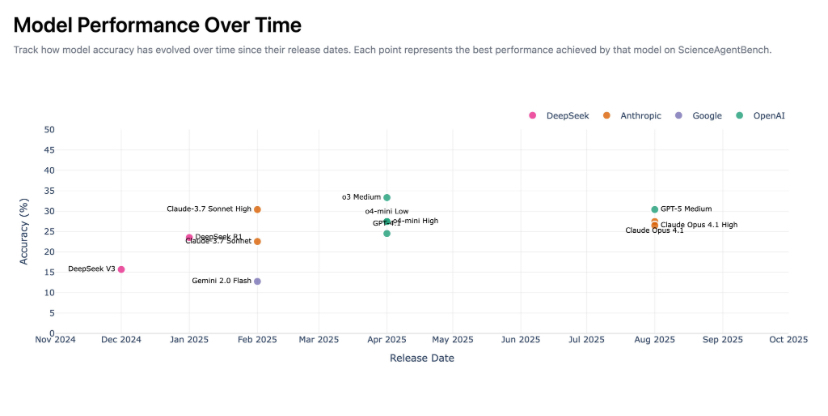

Links to the METR tasks w/ massive error bars at 50% level lmaou.

Someone in the comments rightly points out the comparison with covid isn’t apt. With covid, the underlying mechanism of transmission caused the exponential growth in its spread.

With LLMs the exponential trend is being caused by exponentially spending money and a healthy dose of targeting benchmarks, which is why people are calling the top. The money literally doesn’t exist for this shit to go on so you can create your 50% accurate mechanical turk.

Edit: idk the more I think about this the more it irks me. Like if I was allowed to pick and choose benchmarks that agree with my biases I would post something like this…

… and claim model performance is actually getting worse over time.

The second screenshot goes to a chart where the Y axis is labelled

Task duration (for humans) where logistic regression of our data predicts the AI has a 50% chance of succeeding

So they’re just extrapolating an exponential, not actually measuring it.
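To make that axis label concrete, here is a minimal sketch of how a “50% time horizon” falls out of a logistic fit. It assumes the fit is logistic in log2 of human task duration; the intercept and slope are made-up numbers for illustration, and this is not METR’s code or data.

```python
import math


def success_probability(minutes: float, intercept: float, slope: float) -> float:
    """Fitted chance of success for a task that takes a human `minutes`.

    Assumed model: P = sigmoid(intercept + slope * log2(minutes)),
    with a negative slope so longer tasks are less likely to succeed.
    """
    z = intercept + slope * math.log2(minutes)
    return 1.0 / (1.0 + math.exp(-z))


def horizon_50(intercept: float, slope: float) -> float:
    """Task duration where the fitted curve crosses 50%.

    sigmoid(z) = 0.5 exactly when z = 0, so solve
    intercept + slope * log2(t) = 0 for t.
    """
    return 2.0 ** (-intercept / slope)


if __name__ == "__main__":
    intercept, slope = 3.0, -1.0  # hypothetical fitted coefficients
    t = horizon_50(intercept, slope)
    print(t, success_probability(t, intercept, slope))
```

The point of the sketch is that the plotted number is read off a fitted curve rather than observed directly, so it inherits whatever uncertainty the fit has.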

links to AI 2027.

In Dutch we have a saying (from a commercial, well done on the advertisers there) ‘Wij van Wc-eend adviseren Wc-eend’ (we from the company Wc-eend, suggest you get Wc-eend), which seems appropriate here. It is used in a sarcastic context when somebody gives advice with a clear conflict of interest.

Anyway, just going from the title, ‘X is exponential’ has been the pro-AI cry since The Singularity Is Near. (Which said, well, individual techs follow an S-curve, but all the techs combined are exponential, and variants on that.) It all seems very hopium: immortality is near!

Not sure if this was posted before

“There is no such thing as sex,” Vox says from his lotus position, his eyes closed in religious ecstasy, “only the One Mind jerking itself off.”

I only got through 3 paragraphs, that was plenty

seems like armin ronacher (originally of the flask, jinja & co fame) has also fallen into the vibecoding rabbit hole; which is generally a pity.

a good thing that the pallets projects are now independent from him (and if you build python clis, plumbum is anyway much, much better than click).

He’s been on the vibecoding bandwagon for quite some time on lobste.rs.

indeed, but that “90% of my code is now from ai” piece is even sadder than the rest.

Opening the sack with this shit that spawned in front of me:

Guess it won’t be true AGI!

wait, how much compute would they need for this, ignore patent absurdity of it all for a minute? would they wrap it up under 1 quadrillion dollars?

maybe 2, for good measure

Pigs will be a true method of space exploration if they can fly to Mars in 1 hour.

On top of everything else, “the father of quantum computing” is such lazy writing. People were thinking about it before Deutsch, going back at least to Paul Benioff in 1979. Charlie Bennett and Giles Brassard’s proposal for quantum key distribution predates Deutsch’s quantum Turing machine… No one person should be called “the father of” a subject that had so many crucial contributors in such a short period of time.

Also, chalk up another win for the “billionaires want you to think they are physicists” hypothesis. It’s perhaps not as dependable as pedocon theory, but it’s putting in a strong showing.

the father of quantum computing agrees

And then you read the article and he is basically just saying big if true.

So, as new versions have historically come out about every ~2 years, this means we are 6 years out.

GPT-8: The Ocho

Now trained with comprehensive coverage of top-fuel lawnmower racing and timbersports

I hear GPT-8 will broadcast a dyson sphere circumnavigation race